Lean back and let AI draft your ticket requirements

What happens if you capture an idea in 30 seconds, then let an AI agent turn it into a full implementation-ready ticket? We tried it, and this is what happened.

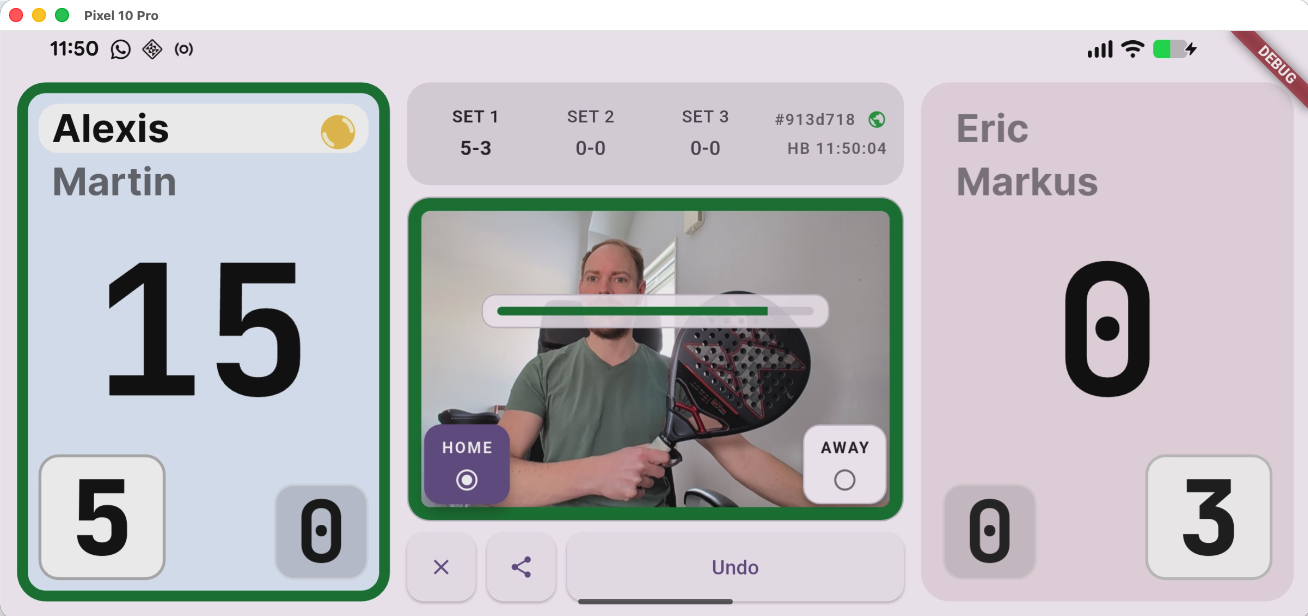

On Wednesday, we ran the first on-court trial of our "padel scoring" prototype for Android and iOS. The goal was narrow: verify the racket-detection point-scoring mechanism in real play.

Screenshot from our in-office racket detection trial run. Keep the racket in view for a brief moment, and a point is awarded to your selected team.

The app is a practical match companion: you record each point by briefly waving your racket in front of the camera. That gives you live match tracking (like any digital scoreboard), plus in-depth post-match analysis and an AI-generated match summary.

Before the first serve, we needed to agree on game-ending behavior after deuce. If you have ever played padel, you know there are two common options: Advantage or Golden Point.

One of our friends immediately suggested: "...let's use the new Star Point rule!"

I had to admit we did not support it yet, so we played with regular Advantage scoring, finished the match, and moved on.

Later, that gap stuck with me.

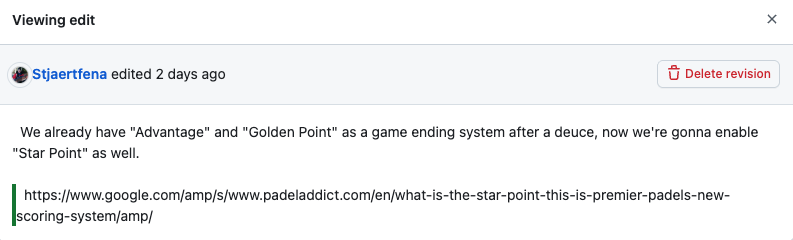

A 30-second capture from my phone

Friday evening, while watching the Olympics, I opened the GitHub mobile app and dropped in a one-line issue so the idea would not disappear:

Enable "Star point" as a game ending system

No detailed requirements. No architecture notes. Just a headline.

A minute later, I added one source link for context: the original explainer from Padel Addict, "What is the star point? This is Premier Padel's new scoring system."

Final mobile issue state: still intentionally short, but now anchored by one trusted reference the agent could use to build an implementation-ready spec.

I already knew how our existing Advantage and Golden Point behavior was implemented, and both paths were covered by unit tests.

Even then, if I had implemented Star Point manually, I would have needed to thread it cleanly through scoring transitions, selectors, readout behavior, and persistence assumptions without breaking current game modes. That is exactly the kind of change that looks small in a sentence but can get subtle fast.

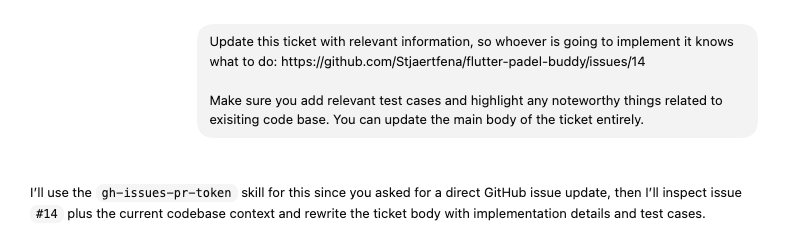

The next day, I decided to give my brief ticket directly to the agent to elaborate.

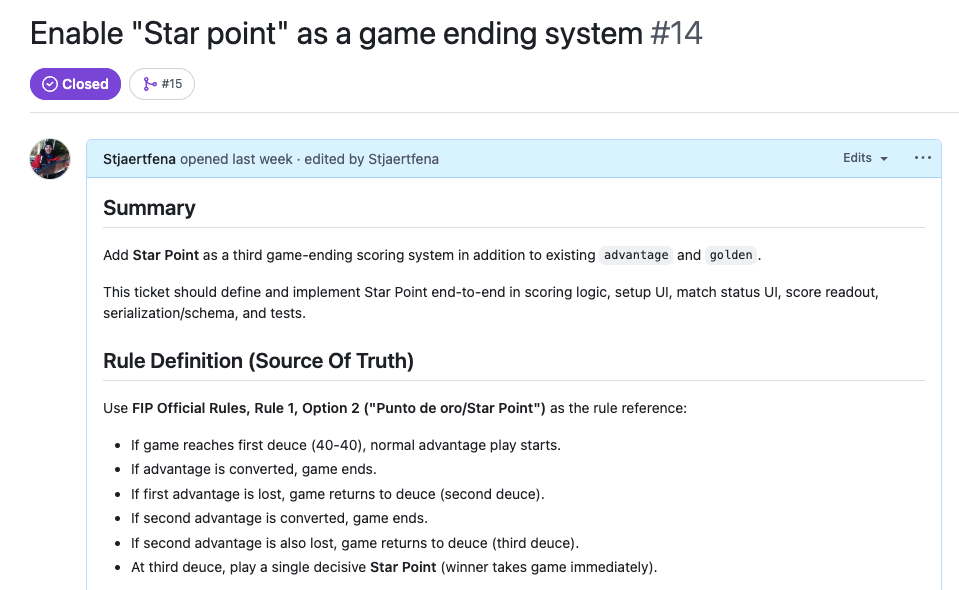

The ticket rewrite that made review easy

I asked Codex to rewrite the issue so that anyone could implement it safely. The prompt was simple: rewrite the ticket with implementation detail, tests, and codebase-aware notes.

In about 12 minutes, a lightweight note became an implementation-ready specification.

The rewritten ticket did not just add words. It provided an engineering brief I could verify quickly:

- A clear scope summary with explicit out-of-scope boundaries.

- A formal Star Point rule definition with authoritative source references.

- A codebase-aware map of likely Flutter touchpoints.

- Concrete implementation requirements, including a highlighted runtime risk.

- A test and acceptance plan spanning scoring logic, selectors, readout, and persistence.

The critical part was the explicit Star Point logic breakdown. It made review dead simple: I could validate behavior and constraints instead of spending time inventing structure.

A compact Star Point logic summary from the rewritten issue, structured so each rule could be verified against expected scoring behavior.
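The rule breakdown from the rewritten issue is not reproduced here, so the following is only a minimal Python sketch of how the three game-ending modes could be expressed in one function. It assumes the commonly reported Star Point rule (at most two advantages per game, with the third deuce decided by a single point). That reading of the rule and every name in the block are illustrative and not taken from the app's Flutter code.

```python
from enum import Enum
from typing import Iterable, Optional


class EndingMode(Enum):
    ADVANTAGE = "advantage"
    GOLDEN_POINT = "golden_point"
    STAR_POINT = "star_point"


# Assumption: under Star Point, the third deuce of a game is decided
# by a single point. Check the official rule text before relying on this.
STAR_POINT_DECIDING_DEUCE = 3


def game_winner(points: Iterable[str], mode: EndingMode) -> Optional[str]:
    """Return "A" or "B" once the game is decided, else None.

    `points` is the sequence of point winners, each "A" or "B".
    Points are counted 0, 1, 2, 3 (love, 15, 30, 40), so deuce is
    any level score from 3-3 upward.
    """
    score = {"A": 0, "B": 0}
    deuces = 0  # how many times this game has reached deuce so far

    for winner in points:
        if winner not in score:
            raise ValueError(f"unknown team: {winner!r}")
        loser = "B" if winner == "A" else "A"

        at_deuce = score["A"] == score["B"] and score["A"] >= 3
        if at_deuce:
            sudden_death = mode is EndingMode.GOLDEN_POINT or (
                mode is EndingMode.STAR_POINT
                and deuces >= STAR_POINT_DECIDING_DEUCE
            )
            if sudden_death:
                return winner  # a single deciding point ends the game

        score[winner] += 1
        if score[winner] >= 4 and score[winner] - score[loser] >= 2:
            return winner  # normal win by two clear points

        if score["A"] == score["B"] and score["A"] >= 3:
            deuces += 1  # the score has just returned to deuce

    return None  # game still in progress
```

For example, `game_winner("AAABBBABABB", EndingMode.STAR_POINT)` returns `"B"`: the last point is played at the third deuce, so it decides the game. The same sequence under `EndingMode.ADVANTAGE` returns `None`, because B has only reached advantage. Keeping all three modes in one pure function is a choice made for this sketch, since it makes each rule easy to check against a table of expected outcomes.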

The setup behind the speed

That ticket quality was not luck. A 30-second capture became a full implementation-ready spec in under 15 minutes through autonomous and asynchronous agent work. The setup below made that possible:

- I work in the ChatGPT Codex macOS app, so my agent can operate across multiple projects.

- I've configured a global skill with a personal access token, so my agent can create, review, and update GitHub issues autonomously.

- I capture raw ideas quickly in the GitHub mobile app.

- I've given the agent extended web access for source validation.

- My agent already has full codebase access, so requirements stay grounded in real implementation details.
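To make the issue-update step concrete, here is a hedged sketch of the kind of request such a skill might send when it rewrites a ticket body. The post does not describe how the skill is actually built, so the function name and its parameters are hypothetical. The endpoint itself is GitHub's standard REST call for updating an issue.

```python
import json
import urllib.request

GITHUB_API = "https://api.github.com"


def build_issue_update(
    owner: str, repo: str, issue_number: int, body: str, token: str
) -> urllib.request.Request:
    """Build (but do not send) a PATCH request that replaces an issue body.

    All arguments are placeholders for illustration; `token` stands in
    for a personal access token with permission to edit issues.
    """
    url = f"{GITHUB_API}/repos/{owner}/{repo}/issues/{issue_number}"
    payload = json.dumps({"body": body}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        method="PATCH",
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
            "X-GitHub-Api-Version": "2022-11-28",
            "Content-Type": "application/json",
        },
    )
```

Passing the returned request to `urllib.request.urlopen` would send it. Building the request separately from sending it keeps the token handling and payload easy to inspect before anything touches a real repository.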

From spec to merged PR

The implementation handoff was intentionally minimal:

"Implement this according to spec." (providing only the ticket URL as additional input)

I gave that prompt to another agent in a fresh session. It read the ticket end-to-end and picked up implementation directly, without any prior prompting or historical context. The resulting PR covered scoring model updates, selector and UI wiring, readout changes, schema and contract updates, and tests.

For small, rules-heavy changes in this app, the strongest signal was this: a fresh session could implement from spec and still ship cleanly.

The workflow we kept

For this class of change, this is currently our default loop.

- Capture the idea immediately, even if it is only a headline.

- Anchor it with one trusted reference (rule source, explainer, or spec doc).

- Ask the agent to produce implementation-ready requirements grounded in code and sources.

- Review and tighten the ticket like a technical spec (scope, risks, tests, out-of-scope).

- Implement against that spec with minimal back-and-forth.

That shifts human effort away from drafting and toward decisions, validation, and shipping.

The takeaway

I used to assume good tickets required uninterrupted desk time. In this workflow, that assumption broke. The bottleneck moved from drafting to review decisions and release quality.

Lean back and let agents handle first-pass ticket drafting, while humans stay on constraints, trade-offs, and sign-off.

What changed for us was not coding speed but decision latency. Once the spec is concrete, implementation becomes interchangeable; until then, no model helps much.